Much of the focus on artificial intelligence has been on the impact that task automation will have on jobs. While PwC expects that the nature of jobs will change and that some will be susceptible to automation, their newest research, Sizing the prize, shows that AI-driven products and services will also generate significant economic value and offsetting job gains, as well as boosting productivity and average wage levels. Organisations still need to develop approaches to embed AI responsibly into our workplaces and to secure the right talent to make the most of the opportunities created.

MARGINALIA spoke with PwC’s AI Programme Leader, Rob McCargow (pictured right), to explore the key findings from their new report. McCargow is deeply involved in the IEEE Global Initiative for Ethical Considerations in Artificial Intelligence and Autonomous Systems, and so shares important ethical considerations and advice on how to maximise AI efforts in a way that benefits the enterprise, its people, and society. He also describes how the technology has already changed some processes, and created new business models and strategies inside and outside of PwC.

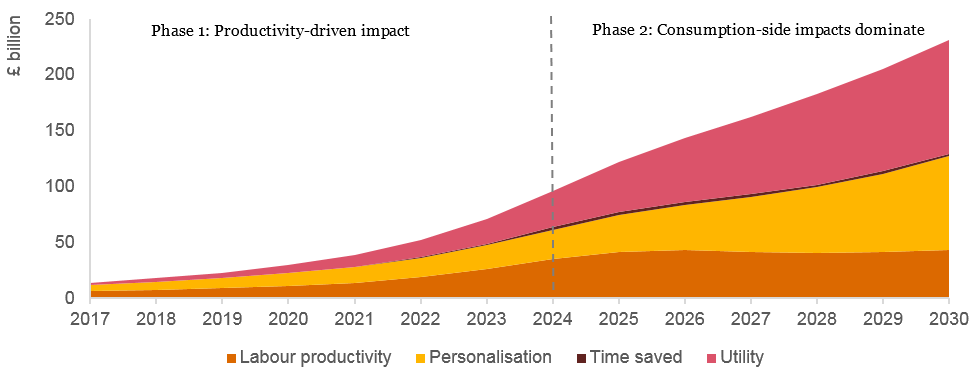

Gloria Lombardi: PwC’s Sizing the prize global AI report quantifies the total economic impact of artificial intelligence in the next 13 years. What will be the major economic gains?

Rob McCargow: By 2030, AI will offer significant financial benefits across the globe. The greatest economic gains from AI are likely to be in China with a potential boost to GDP of up to 26%, and North America (14.5% boost). The potential GDP boost is equivalent to a total of around $10 trillion in these two regions, which account for around two-thirds of the estimated total global economic impact of around $15.7 trillion.

Overall, the biggest gains will be in sectors such as retail, financial services, and healthcare, where AI will increase productivity, product value, and consumption. Three business areas with great potential are image-based diagnostics, on-demand production, and autonomous traffic control.

Within the UK we predict additional GDP growth of 10.3%, which is still a substantial number – it is the equivalent of an additional £232 billion. We project increasing consumer demand, which will drive a greater choice of products, increased personalisation, and affordability over time.

So, the economic upside of the application of AI could be extraordinary, and we should keep in mind the important problems within society that AI will address. One risk, of course, is that the benefits are not spread across society and the globe equally, which poses challenging questions around the concentration of growth in just a few key economies.

GL: From an ethical perspective, what important considerations are surfacing? You have just mentioned the threat of inequality across the global economies.

RM: The ethical implications posed by autonomous decision making have already started surfacing. There have been a number of cases where poorly designed or poorly implemented AI systems have led to unintended consequences. For example, we saw the appearance of discriminatory chatbots and the flash crash in the financial services sector. In another case, an AI system was deployed to identify appropriately qualified candidates for highly remunerated jobs, which ended up prioritising men over women.

At the heart of those issues are a couple of key considerations. The first is algorithmic transparency, on which some leading academics are currently focusing their research efforts. It’s about ensuring that we fully understand how every step toward a decision is taken by autonomous systems and how machines get to certain outcomes. In some cases, with the most advanced AI systems, the developers cannot explain everything, owing to the complexities of the systems. If we cannot explain how an autonomous system has reached an answer, this poses significant risks in the enterprise, in particular in regulated businesses.

The other important ethical consideration is around the way AI is trained and developed. It starts with the curation and selection of big data sets. Using big data inherently risks feeding the AI with biases. If we train AI on biased data sets, it is no surprise that bias and discrimination are amplified. The current workforce developing AI solutions is quite homogeneous; there’s a significant gender imbalance. Many parts of the AI market have fewer than 15% women within the workforce. The risk is that the AI being built is representative only of a particular cohort of our society as opposed to society as a whole.

GL: Given both the gains and the ethical considerations, how should organisations start approaching AI? How can they ensure the benefits to the business and to society as a whole are enhanced, responsibly?

RM: When embarking upon an AI project it is critical to have a multi-disciplinary team of people involved. Of course, you need the deep technical skills, the machine learning expertise, and qualified data scientists. But in order to have a successful adoption of AI you also need to include other key disciplines such as HR – you have to be able to take people on the journey with you. You need to involve your communications specialists to explain the benefits that will be delivered and to demonstrate transparency around it. You need clear communication that does not put people off with jargon and science. You also need experts in data governance and regulation to ensure compliance. And the list goes on from there.

A multi-disciplinary team will allow you to bring a diverse set of opinions into the way the project is designed, rather than leaving it to the technologists alone. There is also a need to build trust in the adoption of AI. If people feel that they are participating in the development and deployment of this technology, then it will not feel like it is being done to them. People resist change that is forced upon them; for a successful integration of AI, people should be involved with every step of the journey.

You need to work hard to develop a diverse workforce, because this has a material impact on the quality of AI that you will produce.

You need to be able to look forward and make sure you are very clear about the future skills your workforce will need, and start acting now. For example, at PwC we partnered with the University of Birmingham and the University of Leeds to launch a Technology Degree Apprenticeship. It is a fully funded degree course, which lasts four years. Students work with us during the downtime of their studies and at the end they come out with a Computer Science Degree. This initiative addresses the gender imbalance within technology and the AI workforce by encouraging women to apply. If we look at it in the short term, yes, it will take a number of years for those individuals to graduate and join us, or any firm. But in the long term, it will significantly improve the talent pipeline, which mitigates the risks of having a homogeneous team developing our future AI systems.

GL: From an employer perspective, how will productivity improve as a consequence of using AI?

RM: To start with, AI provides powerful insights on the performance of an organisation and the productivity of its workforce. Organisations already generate large data sets, yet only a small proportion is meaningfully used.

Inside PwC, we’ve piloted a machine learning optimisation technique. We trained a machine learning platform using a significant amount of data about our workforce. We set the rules for how we resource our projects and how we allocate work to staff. The technology can then significantly optimise how our staff work, not only in a commercial sense but also in ways that improve their well-being. For instance, why do we keep asking a colleague to fly from Scotland to Cornwall every week when we can reorder the way we schedule our staff to reduce travel time? This also benefits our environmental sustainability efforts in terms of car use and carbon footprint.
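
The scheduling idea can be illustrated with a toy example. The Python sketch below is not PwC's system, which the interview does not describe in detail; it simply tries every way of assigning three people to three sites and keeps the assignment with the least total travel. The names, sites, and travel hours are all hypothetical, and a brute-force search like this would only be practical for very small teams.

```python
from itertools import permutations

# Hypothetical weekly travel time in hours for each (person, site) pair.
TRAVEL_HOURS = {
    "Asha": {"Edinburgh": 0.5, "Truro": 9.0, "Leeds": 4.0},
    "Ben": {"Edinburgh": 9.0, "Truro": 0.5, "Leeds": 6.0},
    "Chloe": {"Edinburgh": 4.0, "Truro": 6.5, "Leeds": 0.5},
}


def total_travel(assignment, travel_hours):
    """Sum the weekly travel hours implied by a person -> site assignment."""
    return sum(travel_hours[person][site] for person, site in assignment.items())


def best_assignment(travel_hours):
    """Try every one-to-one assignment and return the one with least travel."""
    people = list(travel_hours)
    sites = list(travel_hours[people[0]])
    candidates = (dict(zip(people, order)) for order in permutations(sites))
    return min(candidates, key=lambda a: total_travel(a, travel_hours))


# A schedule that sends two people to the far end of the country every week.
current = {"Asha": "Truro", "Ben": "Edinburgh", "Chloe": "Leeds"}
proposed = best_assignment(TRAVEL_HOURS)

print("current hours:", total_travel(current, TRAVEL_HOURS))
print("proposed:", proposed)
print("proposed hours:", total_travel(proposed, TRAVEL_HOURS))
```

A real resourcing tool would also have to respect skills, availability, and client commitments, which is where the rules mentioned above come in.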

In time, we will train the AI system to understand the aspirations of our staff, and come up with personalised learning and development opportunities.

GL: How do you ensure the responsible adoption of AI technology at PwC? Can you share any advice with MARGINALIA readers embarking on this fascinating, yet complex journey?

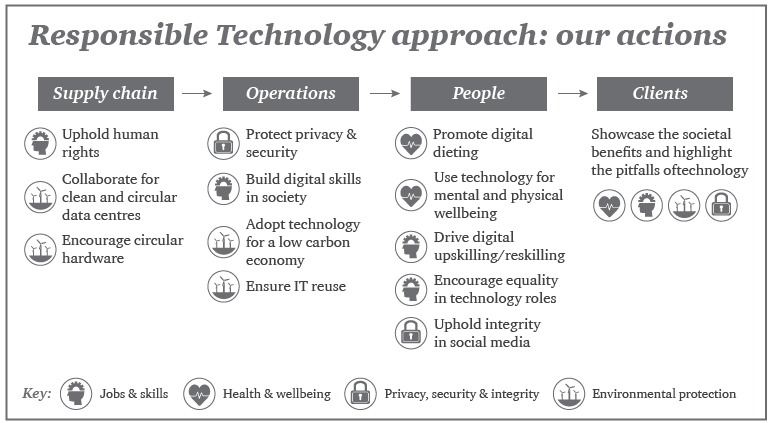

RM: Acknowledging the fact that AI technology will have unprecedented impact on business as well as the public at large, we have developed a couple of important approaches. One is the Responsible Technology Approach. This charter sets out the way we adopt technology inside PwC as well as the way we spread best practice with our clients. We look at human rights, we explore the ways to protect privacy and security, and we discuss our duty of care to build digital skills across society as well as the up-skilling and re-skilling of our staff.

We also address the well-being of our employees in relation to the use of technology – the Digital Dieting programme is one popular method.

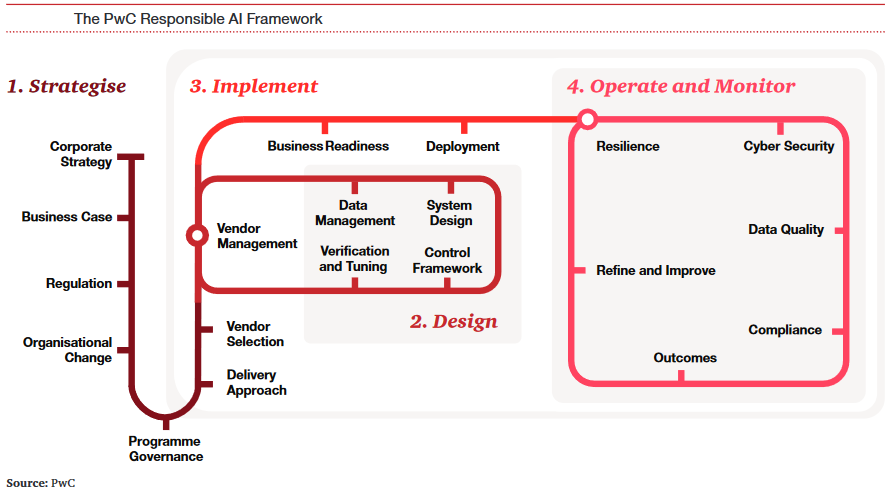

We have also launched the Responsible AI framework. We know there is significant commercial benefit to be gained from adopting AI technology, but our research suggests organisations have not yet started on this journey. We created this framework to allow them to benefit from the innovation, seeing AI as a force for good, but in a way that builds trust with their staff and other stakeholders. The framework ensures that enterprises are able to mitigate the risks and avoid the unintended consequences that we mentioned earlier. For example, in May 2018 the GDPR (General Data Protection Regulation) comes into force. If you combine the risks of a breach of GDPR with poorly adopted AI systems that produce autonomous decisions, an organisation can suffer severe material and commercial damage – the penalty for a breach of GDPR could be as high as 4% of the global annual turnover of an enterprise.
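
To give a sense of scale for that 4% figure, the arithmetic can be sketched in a few lines of Python. This is an illustration only: the function name and the example turnover figures are hypothetical, and the EUR 20 million alternative comes from the upper penalty tier in the GDPR text (Article 83(5)), which sets the maximum at whichever of the two amounts is higher, rather than from the interview.

```python
# Upper-tier GDPR maximum: EUR 20 million or 4% of total worldwide annual
# turnover for the preceding financial year, whichever is higher.
FIXED_CAP_EUR = 20_000_000
TURNOVER_PERCENT = 4


def max_gdpr_fine(global_annual_turnover_eur):
    """Return the upper-tier maximum fine in euros for a given turnover."""
    turnover_based = global_annual_turnover_eur * TURNOVER_PERCENT / 100
    return max(FIXED_CAP_EUR, turnover_based)


# Hypothetical enterprises: one with EUR 2 billion turnover, one with EUR 100 million.
large_enterprise_cap = max_gdpr_fine(2_000_000_000)
smaller_enterprise_cap = max_gdpr_fine(100_000_000)

print(large_enterprise_cap)
print(smaller_enterprise_cap)
```

For the larger enterprise the turnover-based figure applies; for the smaller one the fixed amount is the higher of the two.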

The Responsible AI framework also looks at the risks associated with cyber attacks, how to deploy the right strategy, select the right vendor, and ensure the right governance processes are in place, allowing a company to hardwire ethics into the deployment of AI.

AI will continue developing, becoming fully embedded into our businesses. It is therefore critical to address these foundational questions to accelerate innovation.

Pictures courtesy of PwC
